Introduction

Are deepfakes just viral internet pranks?

Or are they one of the most serious cybersecurity threats facing businesses today?

At Silverback Consulting, we see firsthand how quickly deepfakes are evolving from novelty to weapon. If your organization relies on email, video conferencing, voice calls, or digital identity, you are already in the crosshairs.

This guide answers the most critical questions about deepfakes, breaks down the risks, and outlines exactly how we help organizations defend against them.


What Are Deepfakes and Why Should Businesses Care?

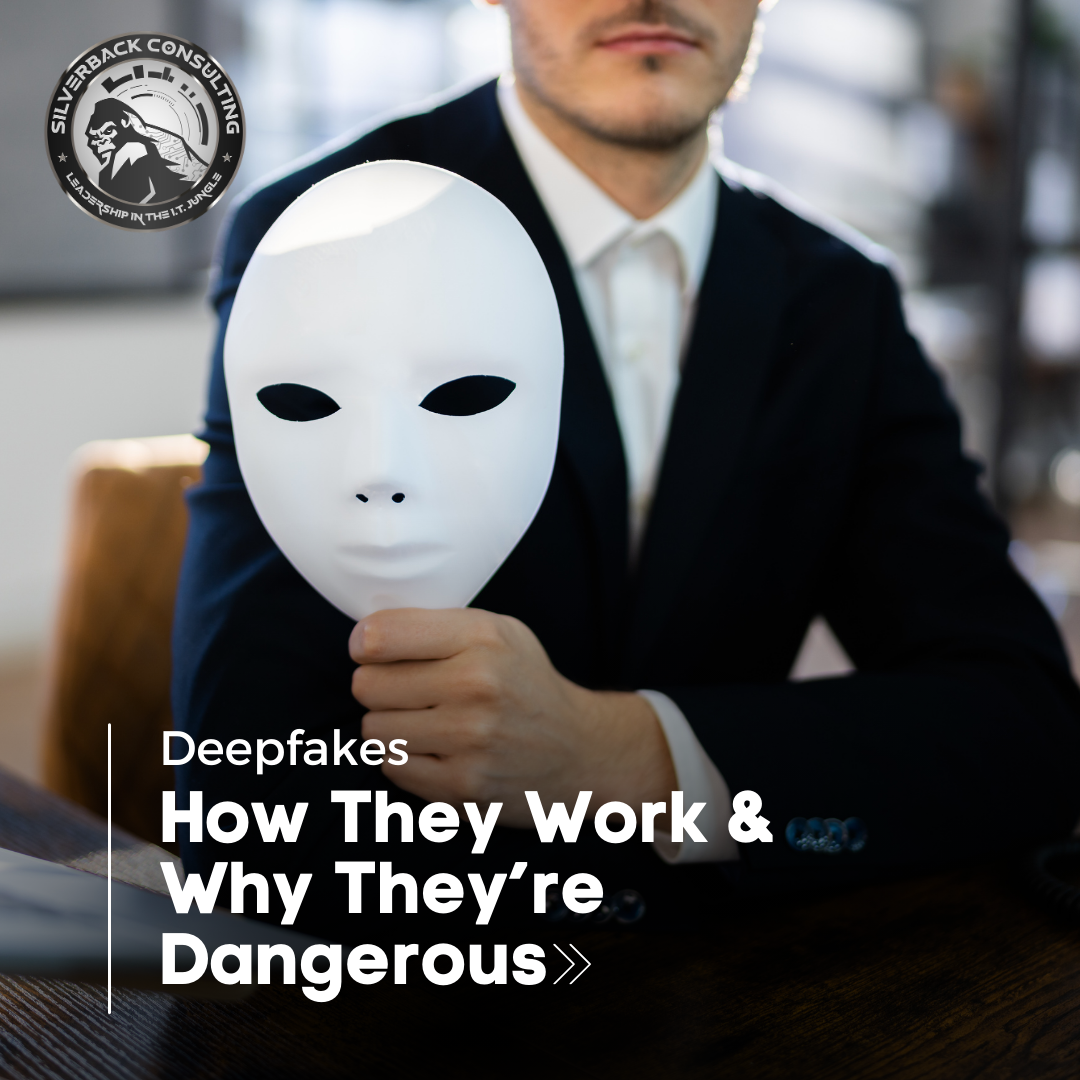

Deepfakes are synthetic media (video, audio, or images) generated using artificial intelligence to impersonate real people with alarming accuracy.

The term combines “deep learning” and “fake,” referring to AI models trained to replicate facial expressions, voice patterns, and even speech cadence.

But here’s the real question:

What happens when a deepfake isn’t entertainment, but an executive giving fraudulent instructions?

We’re no longer talking about harmless memes. We’re talking about:

- Fake CEO voice messages requesting wire transfers

- AI-generated video calls impersonating company leadership

- Fabricated vendor communications

- Synthetic media used for blackmail or reputational damage

For small and mid-sized businesses, the financial and reputational impact can be devastating.

How Do Deepfakes Actually Work?

Understanding the mechanics of deepfakes reveals why they are so dangerous.

AI models, often Generative Adversarial Networks (GANs) or advanced diffusion models, are trained on large volumes of:

- Public videos

- Social media clips

- Interviews

- Voice recordings

With enough data, the AI can convincingly recreate:

- Facial movements

- Micro-expressions

- Speech tone and rhythm

- Natural pauses and emotional inflections

The result? A fabricated identity that can pass quick scrutiny.

And as AI tools become more accessible, creating deepfakes no longer requires advanced technical expertise.

What once demanded specialized skill can now be executed with consumer-level software.

Why Are Deepfakes a Growing Cybersecurity Threat?

Deepfakes are powerful because they exploit trust.

Traditional phishing arrives as email that often looks suspicious on close inspection. Deepfake attacks rely on believability.

Consider these real-world attack patterns:

1. Executive Impersonation Fraud

An attacker uses a deepfake voice to call accounting, requesting an urgent transfer. The voice matches the CEO’s cadence perfectly. The urgency feels authentic. Money is wired before verification.

2. Deepfake Video Conference Attacks

Fraudsters inject AI-generated video into virtual meetings. Employees believe they are speaking to leadership, but they are interacting with a digital forgery.

3. Social Engineering at Scale

Deepfakes amplify traditional social engineering by adding emotional realism. Seeing or hearing a trusted authority overrides skepticism.

4. Reputational Sabotage

Synthetic videos can be released publicly to damage a company’s brand. Even after being proven fake, the reputational damage often lingers.

This is no longer theoretical. Deepfake-related fraud has already cost companies millions.

How Can You Detect Deepfakes?

Many business owners ask:

Can we spot deepfakes with the naked eye?

Sometimes. But detection is becoming harder.

Common indicators may include:

- Slight lip-sync mismatches

- Unnatural blinking patterns

- Audio distortions or inconsistent background noise

- Lighting inconsistencies

However, advanced deepfakes are increasingly seamless.

That’s why detection must go beyond human observation.

At Silverback Consulting, we emphasize layered defense strategies:

- Multi-factor authentication (MFA)

- Strict verification protocols for financial requests

- Email authentication standards (SPF, DKIM, DMARC)

- Security awareness training

- Behavioral anomaly detection

Technology alone is not enough. Process and policy matter just as much.
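As one concrete illustration of the email authentication item above, a published DMARC policy is a DNS TXT record made of semicolon-separated `tag=value` pairs. The Python sketch below parses such a record and flags policies that only monitor spoofed mail rather than block it. The record string and the `example.com` address are made up for illustration, the helper names `parse_dmarc` and `is_enforcing` are hypothetical, and fetching the record from DNS is deliberately left out.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into a tag -> value dictionary."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue  # skip empty segments such as a trailing semicolon
        key, value = part.split("=", 1)
        tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """Return True only when the policy asks receivers to quarantine or reject."""
    tags = parse_dmarc(record)
    if tags.get("v", "").upper() != "DMARC1":
        return False  # not a DMARC record at all
    # Simplifying assumption: a missing p tag is treated like p=none
    return tags.get("p", "none").lower() in {"quarantine", "reject"}


# Illustrative record (hypothetical domain and report address)
example = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"
print(parse_dmarc(example)["p"])
print(is_enforcing(example))
print(is_enforcing("v=DMARC1; p=none"))
```

A domain whose policy is still `p=none` receives reports about spoofed mail but does not ask receiving servers to stop it, which is why a check like this is a reasonable first audit step.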

Are Small Businesses Targets for Deepfakes?

Absolutely.

Attackers often prefer smaller organizations because:

- They may lack advanced security controls

- Verification processes are informal

- Staff may not receive advanced cybersecurity training

- Leadership is more accessible online

Deepfakes do not discriminate based on company size. In fact, mid-sized businesses are increasingly targeted because they have valuable financial assets but weaker defenses.

How We Help Protect Against Deepfakes

At Silverback Consulting, we approach deepfake protection as part of a broader cyber risk management strategy.

1. Email and Communication Security

Deepfakes often work alongside phishing campaigns. We deploy advanced email filtering, impersonation protection, and domain monitoring.

2. Zero Trust Principles

Trust must be verified. Every access request, every instruction, every identity is validated regardless of hierarchy.

3. Incident Response Planning

If a deepfake attack occurs, time is critical. We ensure rapid containment, forensic analysis, and execution of a clear communication strategy.

Our goal is simple: eliminate blind trust and replace it with structured verification.
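One way to make "validate every instruction" concrete is to require that payment instructions carry a cryptographic tag that a cloned voice or face cannot forge. The Python sketch below uses the standard library's `hmac` module to show the idea. It is an illustration under stated assumptions, not a description of any specific product: the shared key, the message format, and the function names `sign_instruction` and `verify_instruction` are hypothetical, and a real deployment would keep keys in a secrets manager.

```python
import hashlib
import hmac


def sign_instruction(key: bytes, instruction: str) -> str:
    """Return a hex HMAC-SHA256 tag for the instruction text."""
    return hmac.new(key, instruction.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_instruction(key: bytes, instruction: str, tag: str) -> bool:
    """Accept the instruction only if the tag matches; sender rank is never consulted."""
    expected = sign_instruction(key, instruction)
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(expected, tag)


# Hypothetical key and message, for demonstration only
key = b"demo-key-not-for-production"
msg = "pay vendor 1042 amount 18500.00 USD"
tag = sign_instruction(key, msg)

print(verify_instruction(key, msg, tag))                             # untouched instruction
print(verify_instruction(key, msg.replace("18500", "98500"), tag))   # altered amount
```

The point of the design is that approval depends on something the requester holds, not on how convincing the requester looks or sounds.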

What Industries Are Most at Risk from Deepfakes?

Certain sectors face elevated exposure:

- Healthcare – impersonation of physicians or executives

- Finance – wire fraud and financial manipulation

- Legal – falsified client communications

- Manufacturing – vendor payment redirection schemes

- Professional Services – reputational attacks

If your business operates in regulated industries, the compliance implications of deepfake fraud can be severe.

How Do Deepfakes Impact Compliance and Liability?

Deepfakes create risk beyond immediate financial loss.

Consider the compliance exposure:

- Failure to protect financial controls

- Insufficient verification procedures

- Data breach implications

- Insurance coverage disputes

Regulators increasingly expect organizations to address emerging AI threats as part of reasonable cybersecurity controls.

Ignoring deepfakes is no longer defensible.

How Can Businesses Build Deepfake Resilience Today?

The most effective defense strategy answers three questions:

1. Can we verify identity independently?

Every high-risk request must require secondary confirmation via a separate channel.

2. Do employees know what deepfakes look and sound like?

Training must evolve alongside AI threats.

3. Do we have layered security controls?

No single tool stops deepfakes. Defense must combine:

- Policy

- Technology

- Human awareness

- Incident readiness
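The first question, independent verification, can be expressed as a simple rule: a high-risk request is approved only after it is confirmed on a channel different from the one it arrived on. A minimal Python sketch follows. The dollar threshold, the channel names, and the `Request` and `can_approve` names are assumptions chosen for illustration, not a prescribed policy.

```python
from dataclasses import dataclass, field

HIGH_RISK_THRESHOLD = 10_000  # hypothetical dollar threshold


@dataclass
class Request:
    requester: str
    amount: float
    arrived_via: str  # e.g. "email", "phone", "video"
    confirmations: set[str] = field(default_factory=set)  # channels that confirmed it

    def confirm(self, channel: str) -> None:
        """Record a confirmation received over the given channel."""
        self.confirmations.add(channel)


def can_approve(req: Request) -> bool:
    """Low-risk requests pass; high-risk ones need a confirmation on a different channel."""
    if req.amount < HIGH_RISK_THRESHOLD:
        return True
    independent = req.confirmations - {req.arrived_via}
    return len(independent) > 0


req = Request(requester="CEO", amount=250_000, arrived_via="video")
print(can_approve(req))            # nothing confirmed yet
req.confirm("video")               # same channel does not count as independent
print(can_approve(req))
req.confirm("known_phone_number")  # callback to a number already on file
print(can_approve(req))
```

Note that the requester's title plays no role in the decision; a convincing video of the CEO is still only one channel.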

Resilience comes from structure, not assumption.

The Future of Deepfakes: What’s Next?

Deepfakes will continue advancing in:

- Real-time generation

- Hyper-realistic voice cloning

- AI-driven conversational agents

- Automated impersonation campaigns

As artificial intelligence becomes more integrated into daily communication, distinguishing the real from the synthetic will become increasingly complex.

Businesses that act now will maintain control. Those that delay will react under pressure.

Why Trust Silverback Consulting?

We specialize in protecting organizations from evolving cyber threats — including AI-driven attacks like deepfakes.

Our approach combines:

- Technical controls

- Policy enforcement

- Compliance alignment

- Executive-level strategy

- Continuous monitoring

We do not rely on fear-based messaging. We implement structured, practical defenses that scale with your organization.

Deepfakes are not a future threat. They are a present reality.

If you operate a business that depends on trust, communication, and financial integrity, you must assume attackers are already studying your digital footprint.

We help you close those gaps before they are exploited.

Conclusion

The question is no longer:

“Are deepfakes real?”

The real question is:

Are you prepared to verify what looks and sounds real?

Organizations that implement verification frameworks, layered security, and ongoing employee training will remain resilient in the age of synthetic media.

At Silverback Consulting, we help businesses transform uncertainty into control and risk into structured defense.

What is a deepfake?

A deepfake is AI-generated synthetic media: video, audio, or images designed to realistically imitate a real person’s face, voice, or actions. Deepfakes use artificial intelligence to create convincing but fabricated content.

Are deepfakes illegal?

Deepfakes are not automatically illegal. However, they become illegal when used for fraud, impersonation, harassment, defamation, election interference, or other criminal activities. Many U.S. states are introducing laws to regulate malicious deepfake use.

When did deepfakes start?

Deepfakes began gaining attention around 2017, when online communities started using AI tools to swap faces in videos. Since then, the technology has rapidly advanced and become more accessible.

What are deepfakes used for?

Deepfakes are used for entertainment, film production, marketing, and education, but they are also used in cybercrime, including executive impersonation scams, financial fraud, social engineering attacks, and reputational damage campaigns.

How do deepfakes work?

Deepfakes use deep learning models trained on large amounts of video, image, or voice data. These AI systems analyze patterns in facial expressions and speech to generate synthetic content that mimics a real person.

Can deepfakes be detected?

Yes, deepfakes can sometimes be detected through visual inconsistencies, audio distortions, or behavioral anomalies. However, advanced deepfakes are increasingly difficult to identify without specialized detection tools and verification processes.

How can I detect deepfakes in videos automatically?

Automatic detection tools use AI-based forensic analysis to identify irregularities in facial movements, blinking patterns, pixel artifacts, and audio synchronization. Businesses should combine automated detection software with strict identity verification procedures.

What kind of cybersecurity solutions protect against deepfake scams?

Effective protection includes multi-factor authentication (MFA), zero-trust security models, financial verification protocols, email impersonation protection, employee security awareness training, and incident response planning.

To protect your business from deepfake fraud and AI-driven impersonation attacks, contact Silverback Consulting today at SilverbackConsulting.us or call (719) 452-2205.

Silverback Consulting

303 South Santa Fe Ave

Pueblo, CO 81003

support@silverbackconsulting.us

“Leadership in the I.T. Jungle”