Introduction

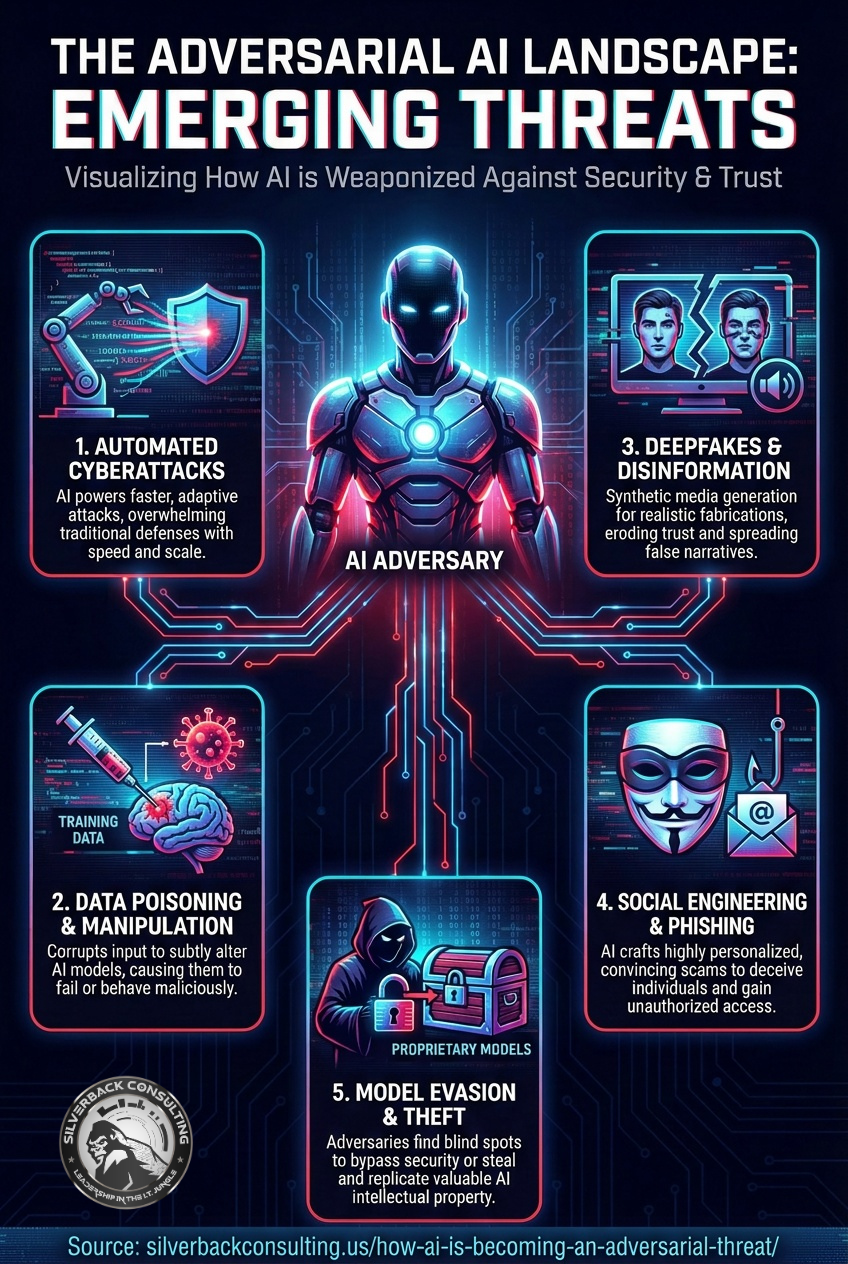

Artificial intelligence was once viewed primarily as a defensive advantage—helping organizations detect anomalies, automate responses, and strengthen security operations.

Today, that narrative is changing. AI cybersecurity threats are reshaping how both attackers and defenders operate.

AI is increasingly being weaponized by cybercriminals, nation-state actors, and fraud rings, turning it into a powerful adversarial threat.

This shift isn’t theoretical. It’s already happening, and it’s reshaping the cybersecurity landscape faster than many organizations are prepared for.


From Tool to Weapon: The Evolution of AI Misuse

AI itself isn’t malicious.

The threat comes from how easily advanced capabilities can be repurposed.

Modern AI systems, including techniques related to generative adversarial networks (GANs), can generate convincing text, clone voices, create realistic images and video, analyze massive datasets, and automate decision-making at scale.

When placed in the wrong hands, those same strengths become force multipliers for attackers.

What once required large teams, deep technical expertise, and time-consuming effort can now be automated, accelerated, and scaled with AI-driven tools.

AI-Powered Social Engineering and Phishing

Social engineering has always relied on psychology. AI makes it far more precise and convincing.

Attackers now use AI to:

- Generate highly personalized phishing emails that match a victim’s writing style, role, and context

- Eliminate grammatical errors and awkward phrasing that once exposed scams

- Rapidly test and refine messages to improve success rates

Some campaigns use AI to analyze social media activity, breached data, and public records to tailor messages that feel legitimate and urgent. The result is phishing that bypasses both human skepticism and traditional email filters.

Deepfakes and Identity Manipulation

One of the most alarming AI cybersecurity threats is the rise of AI-generated deepfakes, many of which build on advances in generative adversarial networks (GANs).

Voice cloning and synthetic video can now convincingly impersonate executives, vendors, or employees.

In several real-world cases, attackers have used AI-generated voices to:

- Authorize fraudulent wire transfers

- Bypass internal approval processes

- Manipulate help desks into resetting credentials

As these technologies improve, visual or audio verification alone will no longer be a reliable trust signal.
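One way to reduce reliance on voice or video as a trust signal is to require a second, independent channel before any high-risk action goes ahead. The sketch below is illustrative only: the `Request` class, the `HIGH_RISK_ACTIONS` set, and the channel names are hypothetical and not taken from any specific product or policy.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions that should never be approved on one channel alone.
HIGH_RISK_ACTIONS = {"wire_transfer", "credential_reset"}


@dataclass(frozen=True)
class Request:
    action: str                          # what is being asked for
    received_via: str                    # channel the request arrived on, e.g. "phone"
    confirmed_via: Optional[str] = None  # separate channel used to confirm, if any


def may_proceed(req: Request) -> bool:
    """Allow high-risk actions only after confirmation on a different channel."""
    if req.action not in HIGH_RISK_ACTIONS:
        return True
    # A convincing voice is not enough: the confirmation must exist and must
    # come from a channel other than the one the request arrived on.
    return req.confirmed_via is not None and req.confirmed_via != req.received_via


# A phone call alone cannot authorize a wire transfer.
print(may_proceed(Request("wire_transfer", "phone")))               # False
# The same request confirmed in person is allowed.
print(may_proceed(Request("wire_transfer", "phone", "in_person")))  # True
```

In practice the confirmation step would typically be a callback to a number already on file or an approval in a separate system, not a reply on the channel the attacker controls.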

Automated Malware, Exploit Development, and Adversarial AI Techniques

AI is also lowering the barrier to technical attacks, a hallmark of adversarial AI being applied to offensive cybersecurity operations.

Threat actors are using AI to:

- Write and obfuscate malware code

- Identify vulnerabilities faster by scanning and analyzing systems at scale

- Adapt malware behavior in real time to evade detection

This automation enables faster attack cycles and more frequent mutation, making signature-based defenses increasingly ineffective.
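To see why frequent mutation undermines signature matching, consider a simplified detector that recognizes files only by an exact hash. This is a minimal sketch under stated assumptions: the byte strings are harmless placeholders, and the function names and blocklist are hypothetical. Real security products use richer signatures than a single hash.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_known_bad(data: bytes, signatures: set) -> bool:
    """Return True only if the exact bytes match a stored signature."""
    return sha256_hex(data) in signatures


# Harmless placeholder standing in for a previously catalogued sample.
original_sample = b"placeholder-sample-v1"
known_signatures = {sha256_hex(original_sample)}

# A variant that differs by a single byte.
mutated_sample = b"placeholder-sample-v2"

print(is_known_bad(original_sample, known_signatures))  # True
print(is_known_bad(mutated_sample, known_signatures))   # False
```

A one-byte change produces a completely different digest, so the variant no longer matches. When attackers can generate variants automatically, an exact-match blocklist is always one step behind.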

Smarter, Faster Reconnaissance

Before launching an attack, adversaries must understand their target. AI dramatically accelerates this phase.

By processing large volumes of open-source intelligence, leaked credentials, and network data, AI can:

- Identify high-value targets within an organization

- Map relationships between employees, vendors, and systems

- Prioritize attack paths with the highest probability of success

What once took weeks of manual research can now happen in minutes.

AI vs. AI: The Adversarial AI Arms Race in Cybersecurity

Defenders are not standing still. AI is also being used to improve threat detection, behavior analysis, and automated response. However, this has created an arms race where both attackers and defenders are leveraging similar technologies.

The difference is incentives. Attackers only need to succeed once. Defenders must succeed every time.

This imbalance makes AI-driven attacks particularly dangerous for small and mid-sized organizations that lack mature security programs.

Why Small Businesses Are Especially at Risk

AI-powered attacks scale cheaply. That means attackers no longer need to focus only on large enterprises.

Small businesses often face:

- Limited security staffing and monitoring

- Overreliance on trust-based processes

- Inconsistent employee security training

- Gaps in identity and access controls

AI allows attackers to exploit these weaknesses efficiently and repeatedly.

Preparing for an AI-Driven Threat Landscape

Organizations don’t need to become AI experts overnight, but they do need to adapt.

Key steps include:

- Strengthening identity verification beyond voice or email alone

- Implementing multi-factor authentication everywhere possible

- Training employees to recognize sophisticated social engineering

- Monitoring for unusual behavior, not just known threats

- Regularly reviewing and testing incident response plans

Most importantly, businesses must assume that attackers are using AI and plan accordingly.
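Monitoring for unusual behavior, as opposed to known threats, can start with something as simple as comparing today's activity to a baseline. The following is a minimal sketch that assumes a hypothetical list of daily login counts for one account and a z-score threshold of 3. Both are illustrative choices, not recommendations.

```python
from statistics import mean, stdev


def is_unusual(history: list, observed: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly constant history: any different value is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical daily login counts for one account over a week.
daily_logins = [4, 5, 6, 5, 4, 6, 5]

print(is_unusual(daily_logins, 5))   # False: in line with the baseline
print(is_unusual(daily_logins, 40))  # True: far outside the normal range
```

The check needs no prior knowledge of the attack. It flags the deviation itself, which is what makes behavior-based monitoring useful against threats that have never been seen before.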

Conclusion

AI is no longer just a defensive asset.

As AI cybersecurity threats grow, adversarial AI techniques, including those inspired by generative adversarial networks (GANs), are actively reshaping how cyberattacks are planned, executed, and scaled.

Organizations that continue to rely on outdated assumptions about attacker sophistication will find themselves increasingly exposed.

Those that recognize AI as both a tool and a threat will be better prepared for today’s risks. Adjusting security strategies accordingly positions them to withstand what comes next.

The question is no longer whether AI will be used against you, but whether you are prepared for it.

Worried About AI-Driven Cyber Threats Targeting Your Business?

If you’re a small business and not sure where your security gaps are, now is the time to act.

Call Silverback Consulting at (719) 452-2205 to speak with a cybersecurity expert, or download our Cybersecurity Guide for Small Businesses to understand the protections you should already have in place.

Silverback Consulting

303 South Santa Fe Ave

Pueblo, CO 81003

support@silverbackconsulting.us

“Leadership in the I.T. Jungle”